Table of Contents

- Models

- Keras Layers

- Video Tutorial

1. Models

- Keras provides a complete framework for creating any type of neural network it supports, from simple models to very large and complex ones.

- We have two alternatives: the Sequential API and the functional API. The Sequential API allows us to create models layer by layer, which is enough for most problems. It is limited in that it does not allow us to create models that share layers or have multiple inputs or outputs.

Alternatively, the functional API gives us a lot more flexibility, as we can easily define models where layers connect to more than just the previous and next layers. Every Keras model is a composition of layers, and each Keras layer represents the corresponding layer in the proposed neural network model.
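As a minimal sketch of the difference, assuming TensorFlow 2 with tf.keras, the snippet below builds one model with each API. The layer sizes, the input names `input_a` and `input_b`, and the choice to share one Dense layer are illustrative assumptions, not something the text above prescribes.

```python
from tensorflow.keras import Input, Model, Sequential
from tensorflow.keras.layers import Concatenate, Dense

# Sequential API: a plain stack with one input and one output.
sequential_model = Sequential([
    Input(shape=(8,)),
    Dense(16),
    Dense(1),
])

# Functional API: two inputs go through the SAME Dense layer (shared weights),
# are concatenated, and feed a single output layer.
input_a = Input(shape=(8,), name="input_a")  # hypothetical input names
input_b = Input(shape=(8,), name="input_b")
shared_dense = Dense(16)
merged = Concatenate()([shared_dense(input_a), shared_dense(input_b)])
output = Dense(1)(merged)
functional_model = Model(inputs=[input_a, input_b], outputs=output)

print(sequential_model.output_shape)
print(len(functional_model.inputs))
# The shared layer's weights are counted once: (8*16 + 16) + (32*1 + 1).
print(functional_model.count_params())
```

The second model cannot be expressed with the Sequential API, because it has two inputs and reuses a layer.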

2. Keras Layers

- Among the core layers we have Dense, Dropout, Activation, Reshape, and Permute.

- If we want to work with special models like CNNs or RNNs, we have to choose the layers specific to them. We can create a complex neural network using those prebuilt layers.

- Besides layers, Keras also groups supporting functionality into modules: the backend, utils, datasets, and applications modules.

Dense layer

- We are going to focus on the commonly used layers in Keras. The first one is the dense layer.

- A dense layer is just a regular layer of a neural network. Each neuron receives input from all the neurons in the previous layer, which is why the layer is called densely connected.

- Dense layers are also known as fully connected layers. To work with dense layers, we first import the Sequential model from Keras. As we are using TensorFlow version 2, we have to use tf.keras instead of the standalone keras package.

- This applies to all the models, layers, and modules. Then we import the Dense layer and the plot_model utility, which we will use to draw the model.

- Now we will create a new model using the Sequential API and add a Dense layer with sixteen neurons as the first hidden layer. As this is the first layer, we have to specify the input shape.

- This shape means we have eight input parameters. The model's summary method prints the structure of our model, including each layer's output shape. And finally, the plot_model function will visualize the model.

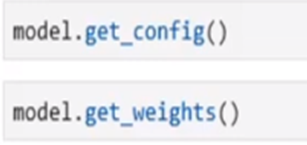
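The steps above can be sketched as follows, assuming TensorFlow 2. The input shape is declared here with an explicit `Input` object, which plays the same role as passing the input shape to the first layer. The `plot_model` call is left commented out because it additionally needs pydot and Graphviz installed, and the file name `model.png` is a placeholder.

```python
from tensorflow.keras import Input, Sequential
from tensorflow.keras.layers import Dense
from tensorflow.keras.utils import plot_model  # drawing needs pydot + Graphviz

# Eight input parameters feeding a hidden layer of sixteen neurons.
model = Sequential([
    Input(shape=(8,)),
    Dense(16),
])

# Prints the layers, their output shapes, and the parameter counts.
model.summary()

# Each of the 16 neurons has 8 weights and 1 bias: 8*16 + 16 parameters.
print(model.output_shape, model.count_params())

# Uncomment to write a diagram of the model to disk (placeholder file name).
# plot_model(model, to_file="model.png", show_shapes=True)
```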

get_config:

We can get details about the model just by using the get_config function. It displays all the parameters of the dense layer: it tells us the width of the layer (the number of units), the activation, and how the weights and biases are initialized.

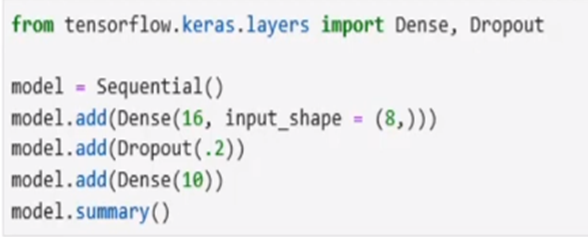
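A minimal sketch of reading a layer's configuration, assuming the same eight-input, sixteen-neuron model as before. Only a few of the keys returned by `get_config` are printed here, and the variable names are illustrative.

```python
from tensorflow.keras import Input, Sequential
from tensorflow.keras.layers import Dense

model = Sequential([Input(shape=(8,)), Dense(16)])
dense_layer = model.layers[0]

# get_config() returns a plain dict describing how the layer is set up.
config = dense_layer.get_config()
for key in ("units", "activation", "use_bias"):
    print(key, "=", config[key])

# The configuration does not contain the learned values themselves;
# those come from get_weights(): one kernel matrix and one bias vector.
kernel, bias = dense_layer.get_weights()
print(kernel.shape, bias.shape)
```

Calling `model.get_config()` works the same way and returns the configuration of every layer in the model.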

Dropout:

- Dropout is a technique used to prevent a model from overfitting.

- First, we import Dropout from Keras. Now we will create a model with eight inputs and a hidden layer of 16 neurons using a Dense layer.

- Then we add the dropout regularisation with a rate of 0.2. It randomly sets 20 percent of its inputs to zero at the time of model training.
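The following sketch, assuming TensorFlow 2, builds the model described above and then calls a standalone Dropout layer directly to show its effect. The all-ones test input, its size, and the `seed` value are illustrative assumptions.

```python
import numpy as np
import tensorflow as tf
from tensorflow.keras import Input, Sequential
from tensorflow.keras.layers import Dense, Dropout

# Eight inputs, a hidden layer of 16 neurons, then dropout with rate 0.2.
model = Sequential([
    Input(shape=(8,)),
    Dense(16),
    Dropout(0.2),
])
print(model.output_shape)

# Call a Dropout layer directly to compare training and inference behaviour.
dropout = Dropout(0.2, seed=0)
x = tf.ones((1, 1000))
y_inference = dropout(x, training=False).numpy()  # passes through unchanged
y_training = dropout(x, training=True).numpy()    # some entries zeroed

# During training, kept values are scaled by 1 / (1 - 0.2) = 1.25 so that
# the expected sum of the activations stays the same.
dropped_fraction = float(np.mean(y_training == 0.0))
print(dropped_fraction)
```

Dropout does not change the shape of the data, and it is only active during training. At inference time the layer returns its input as is.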

Flatten:

- Flatten is used to flatten the input data. Flatten layers are used when we get a multidimensional output and want to make it one-dimensional before passing it on to a dense layer.

- So first we import the required Dense and Flatten layers from Keras. We create a dense layer with two inputs and 16 outputs, which is applied independently to each of the eight steps.

- The input layer's input and output have the same shape, (None, 8, 2). As our hidden layer has 16 neurons, the output of the hidden layer is (None, 8, 16).

- Now, in the Flatten layer, the input is (None, 8, 16) and the output is (None, 128). We get 128 just by multiplying 8 and 16.

- This output is passed as the input of the next dense layer. As this dense layer contains 10 neurons, its output has the shape (None, 10).
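The shapes described above can be checked with a short sketch, assuming TensorFlow 2. The model is built one layer at a time so that the output shape can be read after each step; the variable names are illustrative.

```python
from tensorflow.keras import Input, Sequential
from tensorflow.keras.layers import Dense, Flatten

model = Sequential()
model.add(Input(shape=(8, 2)))  # eight steps, two values per step

# Dense acts on the last axis only, so it is applied to each step separately.
model.add(Dense(16))
shape_after_hidden = model.output_shape

# Flatten merges all non-batch axes: 8 * 16 = 128.
model.add(Flatten())
shape_after_flatten = model.output_shape

model.add(Dense(10))
shape_after_output = model.output_shape

print(shape_after_hidden, shape_after_flatten, shape_after_output)
```

The leading `None` in each shape is the batch dimension, which is left open until data is passed in.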

Permute:

Permute is used to reorder the dimensions of the input according to a specific pattern.

First, we import Permute from Keras. The dense layer has two inputs and 16 outputs, and it is applied independently to each of the eight steps. The Permute layer interchanges the first and second dimensions of the input.

The input size is two and the output size is 16, applied independently to each of the eight steps, so the output of the dense layer will be (None, 8, 16). The input of the Permute layer will be the same, and its output, with the two dimensions swapped, will be (None, 16, 8).
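A minimal sketch of this model, assuming TensorFlow 2. The pattern `(2, 1)` is the Permute argument that swaps the first and second dimensions; the pattern is 1-indexed and does not include the batch dimension.

```python
from tensorflow.keras import Input, Sequential
from tensorflow.keras.layers import Dense, Permute

model = Sequential()
model.add(Input(shape=(8, 2)))  # eight steps, two values per step

model.add(Dense(16))
shape_after_dense = model.output_shape

# Swap dimension 1 (steps) with dimension 2 (features).
model.add(Permute((2, 1)))
shape_after_permute = model.output_shape

print(shape_after_dense, shape_after_permute)
```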

Convolutional neural network

- Now let’s look at a more complex sequential model with convolution and pooling layers followed by flatten and dense layers. First we import the required model, layers, and modules from Keras.

- The network has an input layer consisting of images of size 28 by 28 with one channel, a convolution layer with 32 filters and a kernel size of 5×5, a second convolution layer with the same 32 filters and a kernel size of 5×5, then a max pool layer with a pool size of 2×2, a flatten layer, and finally our dense output with two nodes.

- We already know that the input shape is 28 by 28 with one channel. The output shape of the first convolution layer is 24×24×32, because a 5×5 kernel without padding fits in 28 − 5 + 1 = 24 positions along each side.

- The 32 comes from the number of filters in the first layer.

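- Now, in the MaxPool2D layer, we are using a pool size of 2 by 2, so each spatial dimension of the output is half that of the input: a 24×24×32 input gives an output of 12×12×32. (If the second 5×5 convolution is also unpadded, it first reduces the shape to 20×20×32, and the pooled output is then 10×10×32.)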

- This is then sent to the flatten layer, which flattens its input, so the output is a flat vector. This is passed to the output dense layer of size 1000.
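The architecture above can be sketched as follows, assuming TensorFlow 2. The text does not say which padding the second convolution uses, so the hypothetical helper `build_cnn` takes it as a parameter: with the default `"valid"` padding the pooled shape is 10×10×32, while `"same"` padding on the second convolution reproduces the 12×12×32 shape. The final layer uses the two output nodes from the architecture description; adjust `units` if your output layer differs.

```python
from tensorflow.keras import Input, Sequential
from tensorflow.keras.layers import Conv2D, Dense, Flatten, MaxPooling2D


def build_cnn(second_conv_padding="valid", units=2):
    """Build the CNN step by step and record the output shape after each layer."""
    model = Sequential()
    model.add(Input(shape=(28, 28, 1)))  # 28x28 images with one channel
    shapes = {}

    model.add(Conv2D(32, kernel_size=(5, 5)))  # unpadded: 28 - 5 + 1 = 24
    shapes["conv1"] = model.output_shape

    model.add(Conv2D(32, kernel_size=(5, 5), padding=second_conv_padding))
    shapes["conv2"] = model.output_shape

    model.add(MaxPooling2D(pool_size=(2, 2)))  # halves height and width
    shapes["pool"] = model.output_shape

    model.add(Flatten())
    shapes["flatten"] = model.output_shape

    model.add(Dense(units))
    shapes["dense"] = model.output_shape
    return model, shapes


_, valid_shapes = build_cnn("valid")
_, same_shapes = build_cnn("same")
for name in valid_shapes:
    print(name, valid_shapes[name], same_shapes[name])
```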

Video Tutorial